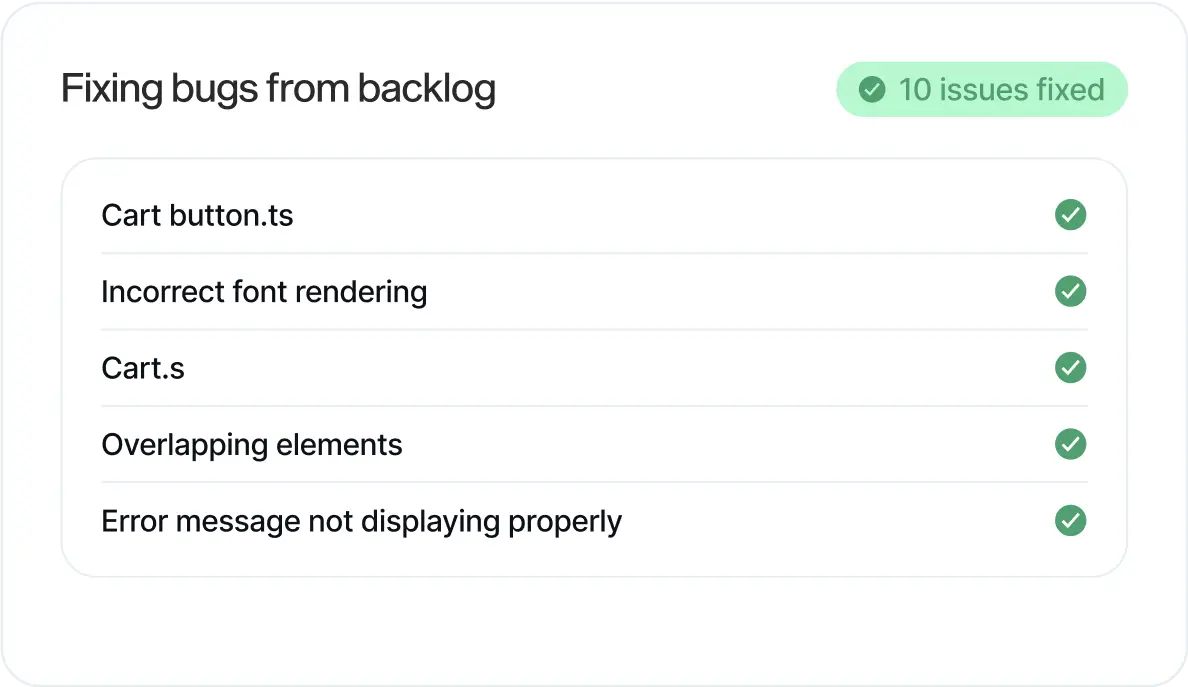

Predictive maintenance for your software

Catch issues before they turn into incidents.

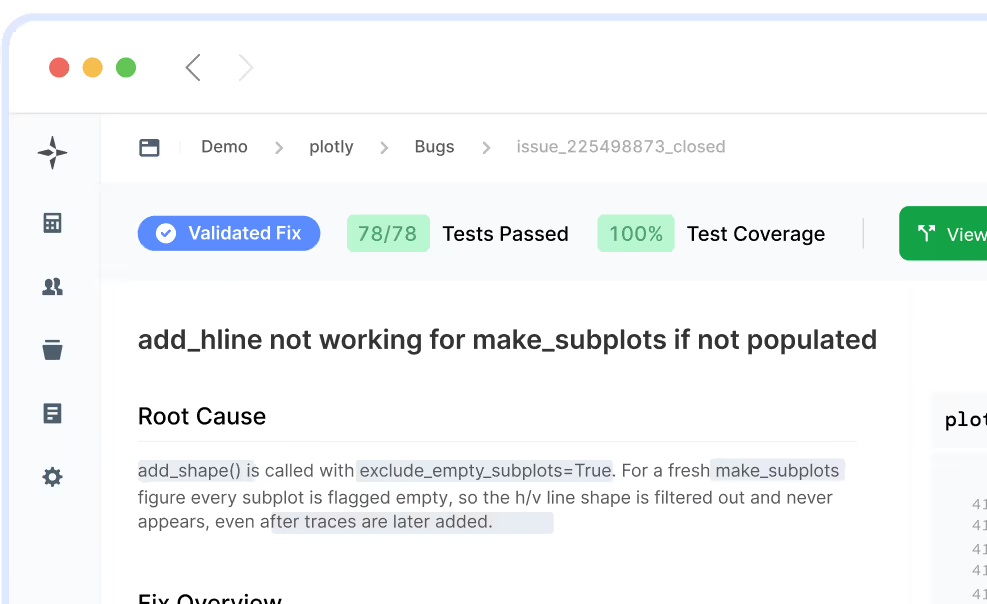

Production incidents cost customer trust, engineering time, and momentum. AI-accelerated development is making the problem worse: more code ships faster, and more issues slip past existing tools. LogicStar finds these issues early and surfaces the ones that matter before they become incidents.

Try out our demo